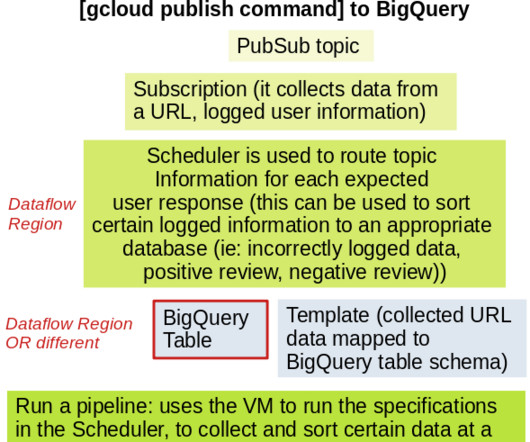

Streaming data to a BigQuery table with GCP

Mlearning.ai

AUGUST 10, 2023

BigQuery is very useful as a centralized location for structured data. Ingestion on GCP is straightforward: the ‘bq load’ command line tool uploads local .csv files, while Pub/Sub and Dataflow are solutions for storing newly created data from website or application activity in either BigQuery or Google Cloud Storage.
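As a rough illustration of the batch path, the sketch below assembles a ‘bq load’ invocation for a local CSV file from Python. The dataset, table, and file names are placeholders rather than anything from a real project, and the helper `build_bq_load_command` is a hypothetical name introduced here. It assumes the CSV has a single header row and that letting BigQuery auto-detect the schema is acceptable.

```python
import shlex


def build_bq_load_command(dataset, table, csv_path, skip_header=True):
    """Return the argument list for a `bq load` call that uploads a local CSV.

    All names passed in are placeholders chosen by the caller.
    """
    # --source_format and --autodetect are standard `bq load` flags;
    # flags must come before the positional table and file arguments.
    cmd = ["bq", "load", "--source_format=CSV", "--autodetect"]
    if skip_header:
        # Assumes exactly one header row in the CSV.
        cmd.append("--skip_leading_rows=1")
    cmd += [f"{dataset}.{table}", csv_path]
    return cmd


if __name__ == "__main__":
    # Hypothetical dataset, table, and file names.
    cmd = build_bq_load_command("my_dataset", "my_table", "./events.csv")
    # Print the command as it would be typed in a shell.
    print(shlex.join(cmd))
```

The function only builds the command. To actually run it, the list could be passed to `subprocess.run(cmd, check=True)` on a machine where the Google Cloud SDK is installed and authenticated.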
