Introduction to Large Language Models (LLMs): An Overview of BERT, GPT, and Other Popular Models

John Snow Labs

JUNE 27, 2023

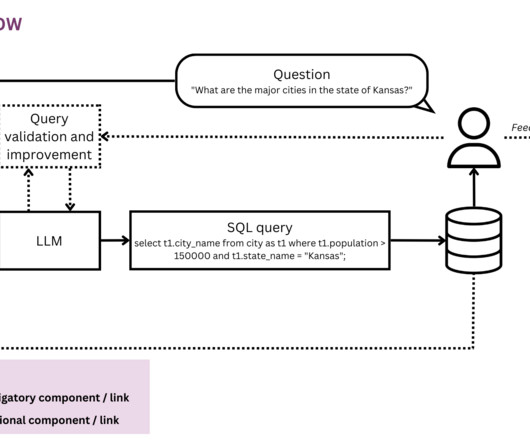

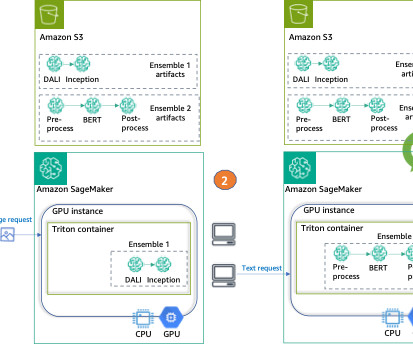

Are you curious about the groundbreaking advancements in Natural Language Processing (NLP)? Prepare to be amazed as we delve into the world of Large Language Models (LLMs), the driving force behind NLP’s remarkable progress. Models such as GPT-4 marked a significant advancement in the field of large language models.
