Machine unlearning: Researchers make AI models ‘forget’ data

AI News

DECEMBER 10, 2024

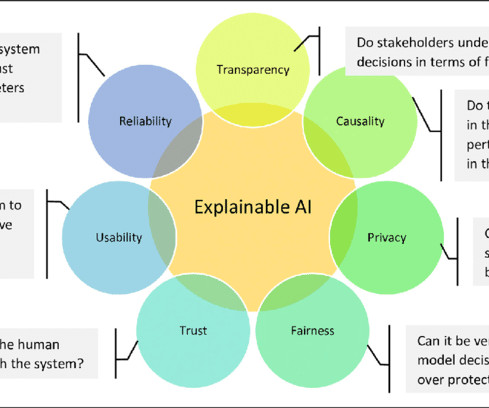

Researchers from the Tokyo University of Science (TUS) have developed a method to enable large-scale AI models to selectively “forget” specific classes of data. Progress in AI has provided tools capable of revolutionising various domains, from healthcare to autonomous driving.
