Who Is Responsible If Healthcare AI Fails?

Unite.AI

JUNE 26, 2023

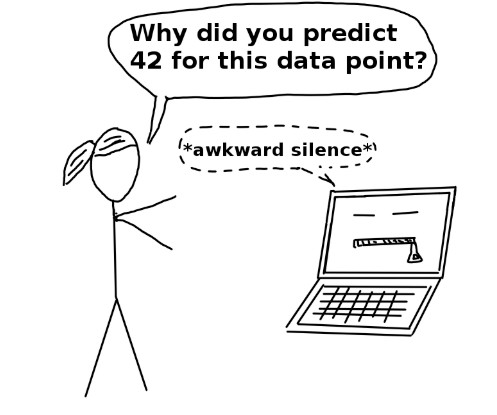

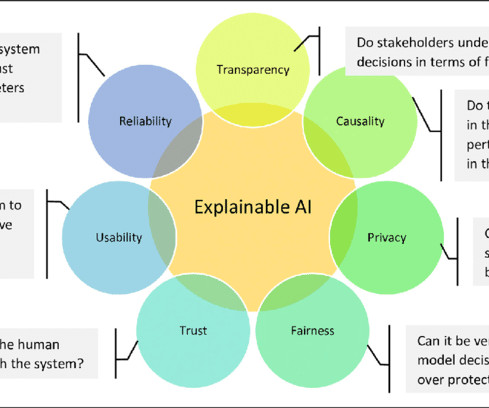

At the root of AI mistakes like these is the nature of AI models themselves. Most AI models today use “black box” logic, meaning no one can see how the algorithm makes decisions. Black box AI lacks transparency, leading to risks like logic bias, discrimination and inaccurate results.
