The Future of Serverless Inference for Large Language Models

Unite.AI

JANUARY 26, 2024

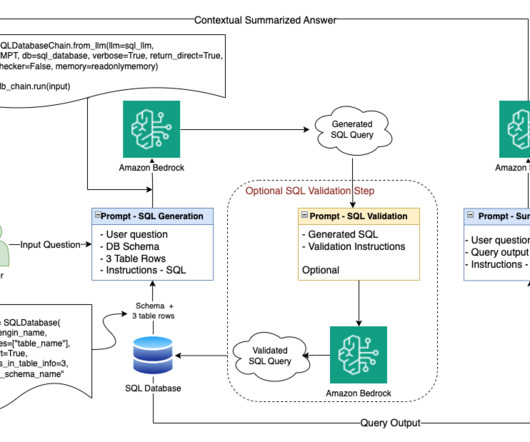

Complementing work on the software architecture side, researchers have proposed serverless inference systems to enable faster deployment of LLMs. Prominent implementations include Amazon SageMaker, Microsoft Azure ML, and open-source options like KServe.
