Go from Engineer to ML Engineer with Declarative ML

MAY 31, 2023

Learn how to easily build any AI model and customize your own LLM in just a few lines of code with a declarative approach to machine learning.

AWS Machine Learning Blog

JANUARY 28, 2025

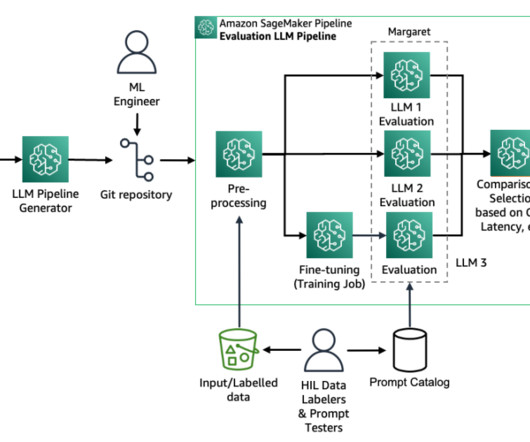

Evaluating large language models (LLMs) is crucial as LLM-based systems become increasingly powerful and relevant in our society. Rigorous testing allows us to understand an LLM's capabilities, limitations, and potential biases, and provides actionable feedback to identify and mitigate risk.

AWS Machine Learning Blog

DECEMBER 9, 2024

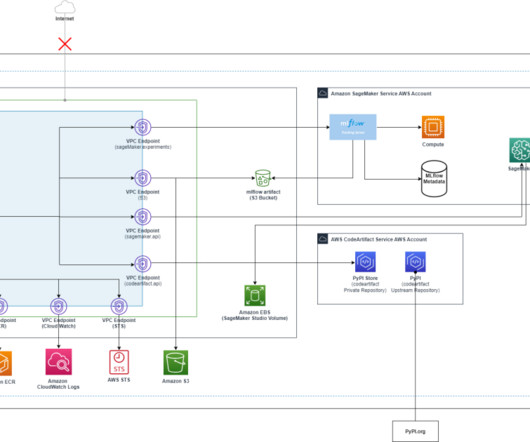

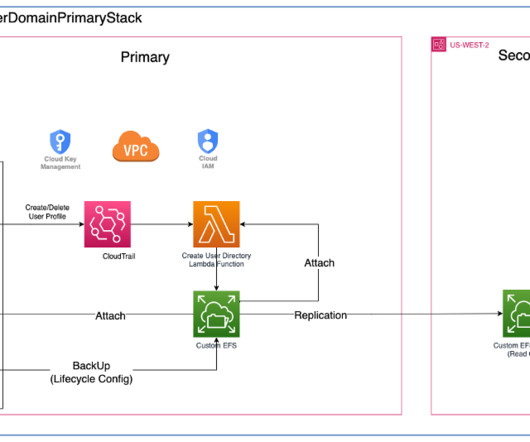

With access to a wide range of generative AI foundation models (FMs) and the ability to build and train their own machine learning (ML) models in Amazon SageMaker, users want a seamless and secure way to experiment with and select the models that deliver the most value for their business.

DECEMBER 12, 2023

Whether you're a seasoned ML engineer or a new LLM developer, these tools will help you get more productive and accelerate the development and deployment of your AI projects.

AWS Machine Learning Blog

FEBRUARY 12, 2025

Researchers developed Medusa, a framework to speed up LLM inference by adding extra heads to predict multiple tokens simultaneously. This post demonstrates how to use Medusa-1, the first version of the framework, to speed up an LLM by fine-tuning it on Amazon SageMaker AI, and confirms the speedup with deployment and a simple load test.

Unite.AI

FEBRUARY 11, 2025

Transforming AI Performance Across Industries: Future AGI is already delivering impactful results across industries. A Series E sales-tech company used Future AGI's LLM Experimentation Hub to achieve 99% accuracy in its agentic pipeline, compressing weeks of work into just hours.

AWS Machine Learning Blog

FEBRUARY 21, 2025

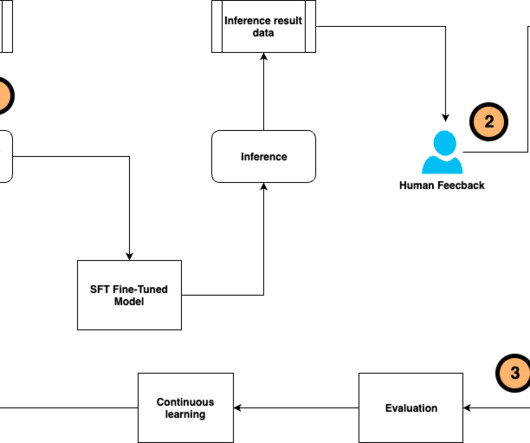

Fine-tuning a pre-trained large language model (LLM) allows users to customize the model to perform better on domain-specific tasks or align more closely with human preferences. You can use supervised fine-tuning (SFT) and instruction tuning to train the LLM to perform better on specific tasks using human-annotated datasets and instructions.

AWS Machine Learning Blog

OCTOBER 22, 2024

Amazon SageMaker is a cloud-based machine learning (ML) platform within the AWS ecosystem that offers developers a seamless and convenient way to build, train, and deploy ML models. He focuses on architecting and implementing large-scale generative AI and classic ML pipeline solutions.

AWS Machine Learning Blog

APRIL 2, 2025

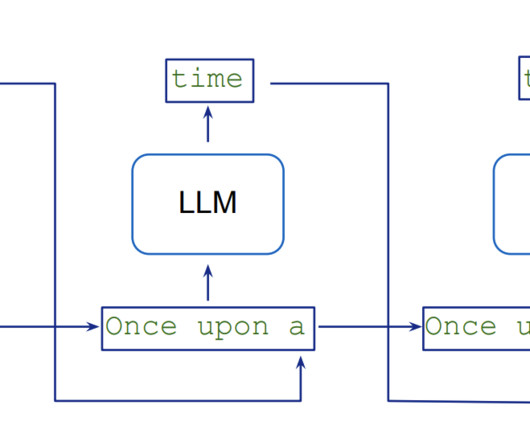

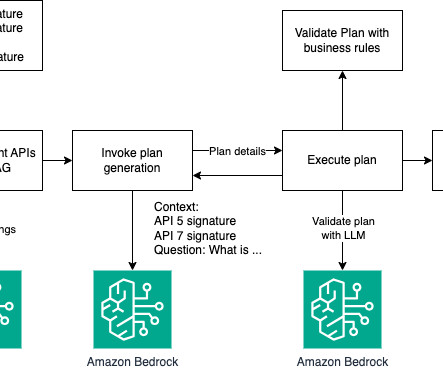

The goal of this blog post is to show you how a large language model (LLM) can be used to perform tasks that require multi-step dynamic reasoning and execution. (Fig. 1: Simple execution flow solution overview.) In a more complex scheme, you can add multiple layers of validation and provide relevant APIs to increase the success rate of the LLM.

AWS Machine Learning Blog

OCTOBER 16, 2024

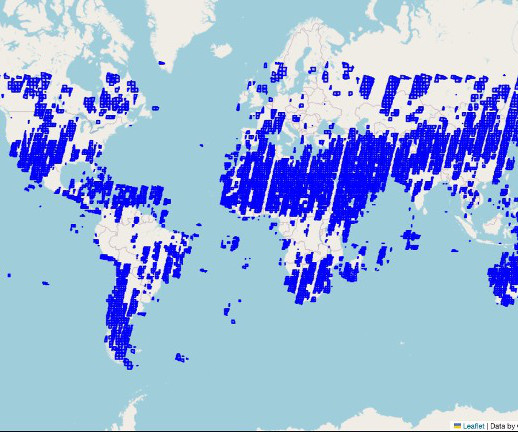

Amazon SageMaker supports geospatial machine learning (ML) capabilities, allowing data scientists and ML engineers to build, train, and deploy ML models using geospatial data. SageMaker Processing provisions cluster resources for you to run city-, country-, or continent-scale geospatial ML workloads.

AWS Machine Learning Blog

JULY 24, 2024

Large language models (LLMs) have achieved remarkable success in various natural language processing (NLP) tasks, but they may not always generalize well to specific domains or tasks. You may need to customize an LLM to adapt to your unique use case, improving its performance on your specific dataset or task.

AWS Machine Learning Blog

MAY 9, 2024

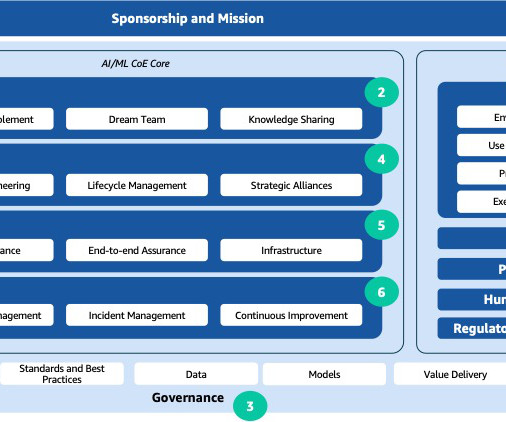

The rapid advancements in artificial intelligence and machine learning (AI/ML) have made these technologies a transformative force across industries. An effective approach that addresses a wide range of observed issues is the establishment of an AI/ML center of excellence (CoE). What is an AI/ML CoE?

ODSC - Open Data Science

APRIL 2, 2025

Whether an engineer is cleaning a dataset, building a recommendation engine, or troubleshooting LLM behavior, these cognitive skills form the bedrock of effective AI development. Engineers who can visualize data, explain outputs, and align their work with business objectives are consistently more valuable to their teams.

Marktechpost

JUNE 14, 2024

Recently, Yandex has introduced a new solution: YaFSDP, an open-source tool that promises to revolutionize LLM training by significantly reducing GPU resource consumption and training time. ML engineers can leverage this tool to enhance the efficiency of their LLM training processes. Check out the GitHub Page.

AWS Machine Learning Blog

FEBRUARY 11, 2025

Get started with SageMaker JumpStart: SageMaker JumpStart is a machine learning (ML) hub that can help accelerate your ML journey. Marc Karp is an ML Architect with the Amazon SageMaker Service team. He focuses on helping customers design, deploy, and manage ML workloads at scale.

AWS Machine Learning Blog

MARCH 18, 2025

This transcription then serves as the input for a powerful LLM, which draws upon its vast knowledge base to provide personalized, context-aware responses tailored to your specific situation. LLM integration: The preprocessed text is fed into a powerful LLM tailored for the healthcare and life sciences (HCLS) domain.

ODSC - Open Data Science

MARCH 10, 2025

Building Multimodal AI Agents: Agentic RAG with Vision-Language Models. Suman Debnath, Principal AI/ML Advocate at Amazon Web Services. Building a truly intelligent AI assistant requires overcoming the limitations of native Retrieval-Augmented Generation (RAG) models, especially when handling diverse data types like text, tables, and images.

Snorkel AI

MARCH 20, 2025

GenAI evaluation with SME-evaluator agreement: AI/ML engineers develop specialized evaluators with ground truth. Let's consider an LLM-as-a-Judge (LLMAJ) which checks to see if an AI assistant has repeated itself. It's far more likely that the AI/ML engineer needs to go back and continue iterating on the prompt.
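One way to read "SME-evaluator agreement" is as the fraction of examples on which the automated judge and a subject-matter expert reach the same verdict. The sketch below assumes that interpretation; the function name `agreement_rate` and the sample verdicts are hypothetical and are not taken from the Snorkel AI post.

```python
from typing import Sequence


def agreement_rate(evaluator_verdicts: Sequence[bool], sme_labels: Sequence[bool]) -> float:
    """Return the fraction of examples where the judge matches the SME label."""
    if len(evaluator_verdicts) != len(sme_labels):
        raise ValueError("verdicts and labels must have the same length")
    if not sme_labels:
        raise ValueError("at least one labeled example is required")
    matches = sum(e == s for e, s in zip(evaluator_verdicts, sme_labels))
    return matches / len(sme_labels)


# Hypothetical data: True means "the assistant repeated itself".
evaluator = [True, False, True, True, False]
sme = [True, False, False, True, False]

rate = agreement_rate(evaluator, sme)
print(f"SME-evaluator agreement: {rate:.0%}")
```

A low agreement rate under this reading would suggest revisiting the judge's prompt before trusting its verdicts at scale.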

ODSC - Open Data Science

APRIL 1, 2025

Building Multimodal AI Agents: Agentic RAG with Image, Text, and Audio Inputs Suman Debnath, Principal AI/ML Advocate at Amazon Web Services Discover the transformative potential of Multimodal Agentic RAG systems that integrate image, audio, and text to power intelligent, real-world applications.

Marktechpost

DECEMBER 1, 2024

It supports multiple LLM providers, making it compatible with a wide array of hosted and local models, including OpenAI’s models, Anthropic’s Claude, and Google Gemini. This combination of technical depth and usability lowers the barrier for data scientists and ML engineers to generate synthetic data efficiently.

Towards AI

JANUARY 30, 2024

The Top Secret Behind Effective LLM Training in 2024: Large-scale unsupervised language models (LMs) have shown remarkable capabilities in understanding and generating human-like text. ML Engineers (LLM), Tech Enthusiasts, VCs, etc. Anybody previously acquainted with ML terms should be able to follow along.

Marktechpost

MAY 30, 2024

Introduction to AI and Machine Learning on Google Cloud This course introduces Google Cloud’s AI and ML offerings for predictive and generative projects, covering technologies, products, and tools across the data-to-AI lifecycle. It includes labs on feature engineering with BigQuery ML, Keras, and TensorFlow.

Towards AI

FEBRUARY 18, 2025

AI agents, on the other hand, hold a lot of promise but are still constrained by the reliability of LLM reasoning. From an engineering perspective, the core challenge for both lies in improving accuracy and reliability to meet real-world business requirements. They also inspired a bunch of new potentials for ML engineers.

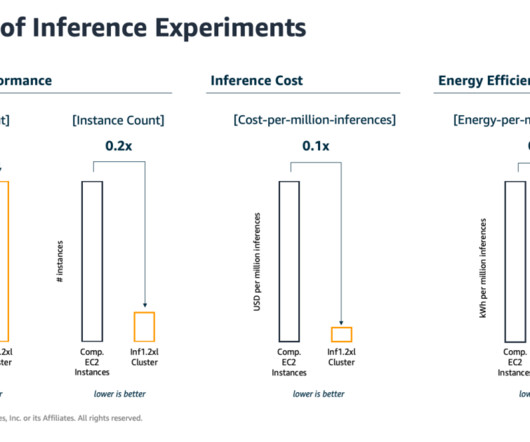

JUNE 20, 2023

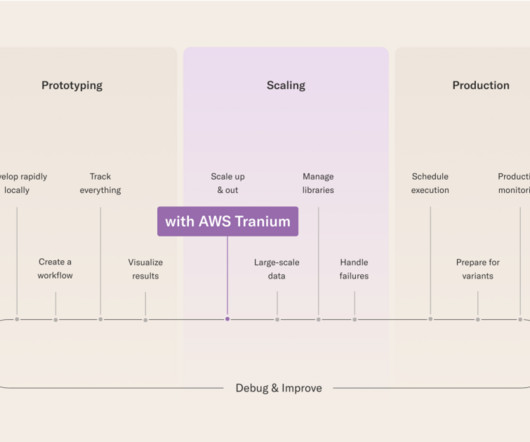

Machine learning (ML) engineers have traditionally focused on striking a balance between model training and deployment cost vs. performance. This is important because training ML models and then using the trained models to make predictions (inference) can be highly energy-intensive tasks.

AWS Machine Learning Blog

FEBRUARY 26, 2024

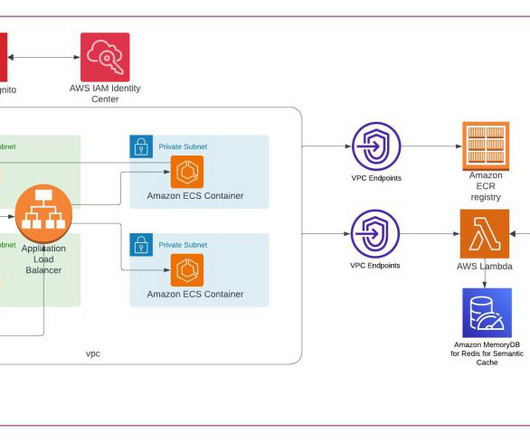

Our proposed architecture provides a scalable and customizable solution for online LLM monitoring, enabling teams to tailor the monitoring solution to their specific use cases and requirements. We suggest that each module take incoming inference requests to the LLM, passing prompt and completion (response) pairs to metric compute modules.

AWS Machine Learning Blog

MARCH 14, 2024

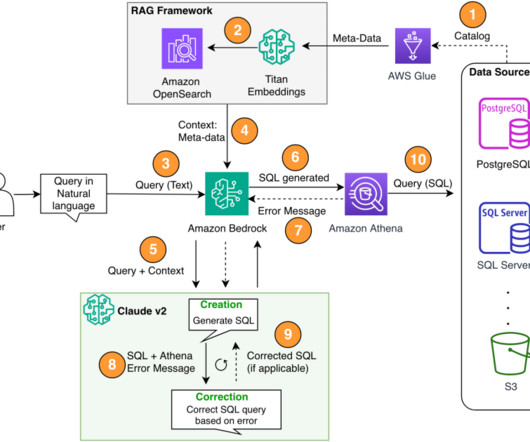

We formulated a text-to-SQL approach whereby a user’s natural language query is converted to a SQL statement using an LLM. This data is again provided to an LLM, which is asked to answer the user’s query given the data. The relevant information is then provided to the LLM for final response generation.

Snorkel AI

MARCH 18, 2025

However, when evaluations provide deep insights into the behavior of GenAI applications, AI/ML engineers can quickly identify what improvements are needed and correctly determine the best way to implement them, resulting in a much faster and far more efficient GenAI development process.

Unite.AI

FEBRUARY 28, 2024

Attackers may attempt to fine-tune surrogate models using queries to the target LLM to reverse-engineer its knowledge. Adversaries can also attempt to breach cloud environments hosting LLMs to sabotage operations or exfiltrate data. Stolen models also create additional attack surface for adversaries to mount further attacks.

AWS Machine Learning Blog

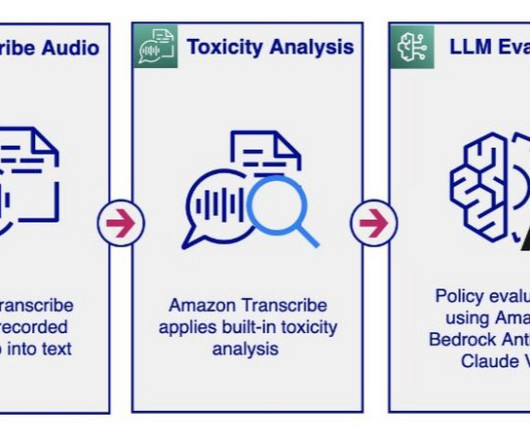

MARCH 13, 2024

The LLM analysis provides a violation result (Y or N) and explains the rationale behind the model’s decision regarding policy violation. The audio moderation workflow activates the LLM’s policy evaluation only when the toxicity analysis exceeds a set threshold. LLMs, in contrast, offer a high degree of flexibility.
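The gating described above, where the LLM policy check runs only when the toxicity score exceeds a threshold, can be sketched as follows. This is a minimal illustration under stated assumptions: `moderate`, `ModerationResult`, the stub `fake_policy_check`, and the default threshold of 0.5 are all hypothetical and do not come from the AWS post, which uses managed services for both steps.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class ModerationResult:
    toxicity: float
    llm_invoked: bool
    violation: Optional[str]   # "Y", "N", or None when the LLM was skipped
    rationale: Optional[str]


def moderate(
    transcript: str,
    toxicity_score: float,
    llm_policy_check: Callable[[str], Tuple[str, str]],
    threshold: float = 0.5,    # assumed value, tune for your policy
) -> ModerationResult:
    """Invoke the (costlier) LLM policy check only above the toxicity threshold."""
    if toxicity_score <= threshold:
        return ModerationResult(toxicity_score, False, None, None)
    violation, rationale = llm_policy_check(transcript)
    return ModerationResult(toxicity_score, True, violation, rationale)


calls = []


def fake_policy_check(text: str) -> Tuple[str, str]:
    """Stand-in for a real LLM call; records each invocation."""
    calls.append(text)
    return "Y", "Contains language that the policy prohibits."


low = moderate("have a nice day", 0.1, fake_policy_check)
high = moderate("some hostile text", 0.9, fake_policy_check)
print(low.llm_invoked, high.llm_invoked, high.violation)
```

Skipping the LLM for low-toxicity segments keeps cost and latency down, at the price of missing violations that the toxicity model scores low.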

The MLOps Blog

JULY 4, 2024

They enable efficient context retrieval or dynamic few-shot prompting to improve the factual accuracy of LLM-generated responses. Use re-ranking or contextual compression techniques to ensure only the most relevant information is provided to the LLM, improving response accuracy and reducing cost.

AWS Machine Learning Blog

MAY 13, 2024

In part 1 of this blog series, we discussed how a large language model (LLM) available on Amazon SageMaker JumpStart can be fine-tuned for the task of radiology report impression generation. We also explore the utility of the RAG prompt engineering technique as it applies to the task of summarization.

Snorkel AI

OCTOBER 27, 2023

Snorkel AI held its Enterprise LLM Virtual Summit on October 26, 2023, drawing an engaged crowd of more than 1,000 attendees across three hours and eight sessions that featured 11 speakers. How to fine-tune and customize LLMs: Hoang Tran, ML Engineer at Snorkel AI, outlined how he saw LLMs creating value in enterprise environments.

AWS Machine Learning Blog

AUGUST 22, 2024

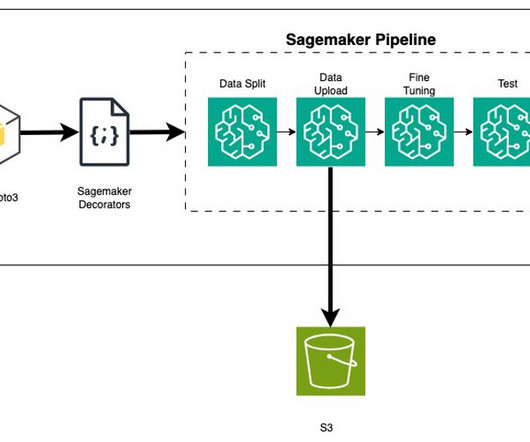

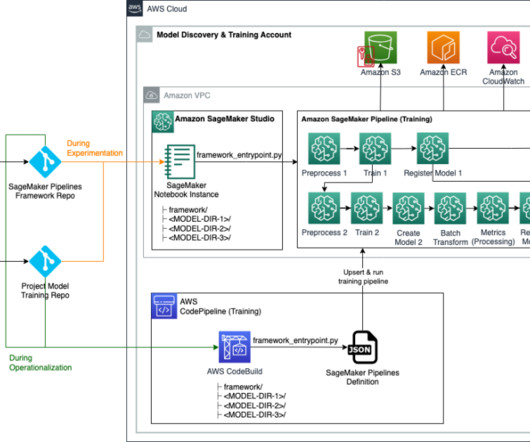

You can use Amazon SageMaker Model Building Pipelines to collaborate between multiple AI/ML teams. SageMaker Pipelines: You can use SageMaker Pipelines to define and orchestrate the various steps involved in the ML lifecycle, such as data preprocessing, model training, evaluation, and deployment. We use Python to do this.

AWS Machine Learning Blog

JANUARY 10, 2024

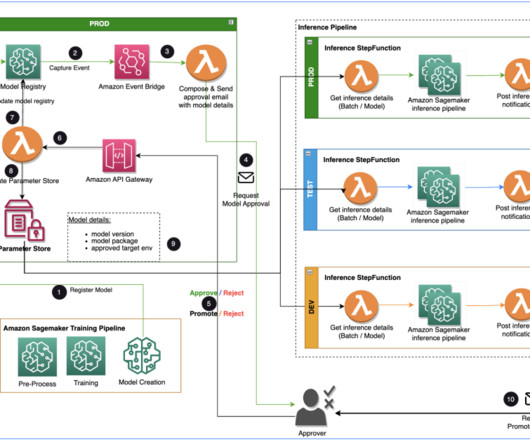

Specialist Data Engineering at Merck, and Prabakaran Mathaiyan, Sr. ML Engineer at Tiger Analytics. The large machine learning (ML) model development lifecycle requires a scalable model release process similar to that of software development. The input to the training pipeline is the features dataset.

Marktechpost

OCTOBER 11, 2023

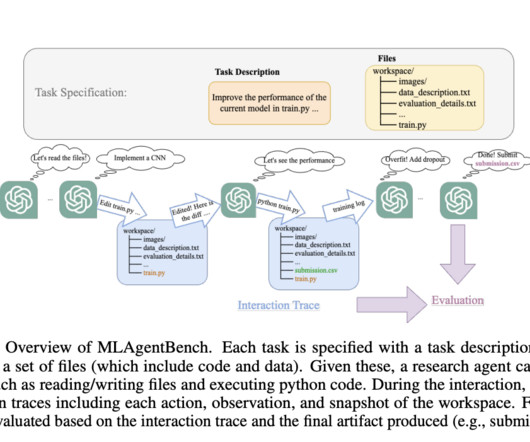

The team started with a collection of 15 ML engineering projects spanning various fields, with experiments that are quick and cheap to run. At a high level, they simply ask the LLMs to take the next action, using a prompt that is automatically produced based on the available information about the task and previous steps.

Towards AI

MAY 30, 2024

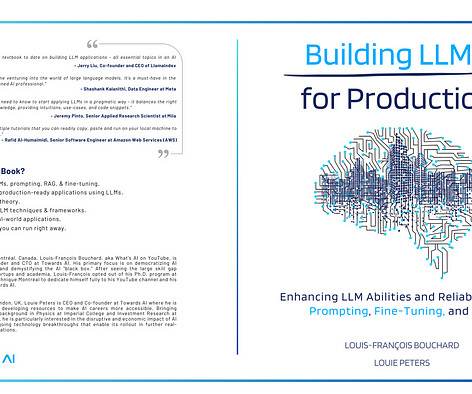

“Building LLMs for Production: Enhancing LLM Abilities and Reliability with Prompting, Fine-Tuning, and RAG” is now available on Amazon! The application topics include prompting, RAG, agents, fine-tuning, and deployment, all essential topics in an AI Engineer’s toolkit. The de facto manual for AI Engineering.

AWS Machine Learning Blog

SEPTEMBER 1, 2023

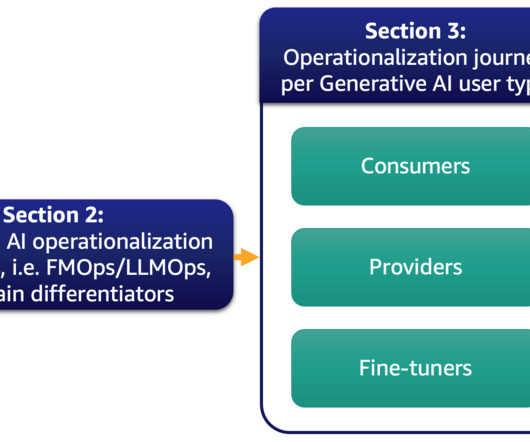

Furthermore, we deep dive on the most common generative AI use case of text-to-text applications and LLM operations (LLMOps), a subset of FMOps. The ML consumers are other business stakeholders who use the inference results (predictions) to drive decisions. The following figure illustrates the topics we discuss.

AWS Machine Learning Blog

FEBRUARY 28, 2024

on Amazon Bedrock as our LLM. The multi-step component allows the LLM to correct the generated SQL query for accuracy. We use Athena error messages to enrich our prompt for the LLM for more accurate and effective corrections in the generated SQL. About the Authors Sanjeeb Panda is a Data and ML engineer at Amazon.
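The multi-step correction idea, feeding the database's error message back into the prompt so the model can repair its own SQL, can be sketched as a retry loop. The sketch below is an assumption-laden illustration, not the post's implementation: it uses Python's built-in `sqlite3` in place of Athena, a stub function `fake_llm` in place of a Bedrock-hosted model, and the hypothetical helper name `generate_sql_with_retries`.

```python
import sqlite3
from typing import Callable, List, Tuple


def generate_sql_with_retries(
    question: str,
    generate_sql: Callable[[str], str],
    conn: sqlite3.Connection,
    max_attempts: int = 3,
) -> Tuple[str, List[tuple]]:
    """Ask the model for SQL; on failure, retry with the error added to the prompt."""
    prompt = question
    last_error = None
    for _ in range(max_attempts):
        sql = generate_sql(prompt)
        try:
            return sql, conn.execute(sql).fetchall()
        except sqlite3.Error as exc:
            last_error = exc
            # Enrich the prompt with the failing query and the engine's error message.
            prompt = (
                f"{question}\nPrevious SQL: {sql}\nError: {exc}\n"
                "Please correct the query."
            )
    raise RuntimeError(f"no valid SQL after {max_attempts} attempts: {last_error}")


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model: wrong table name first, fixed after seeing an error."""
    if "Error:" in prompt:
        return "SELECT COUNT(*) FROM orders"
    return "SELECT COUNT(*) FROM orderz"


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER)")
conn.executemany("INSERT INTO orders VALUES (?)", [(1,), (2,)])

sql, rows = generate_sql_with_retries("How many orders are there?", fake_llm, conn)
print(sql, rows)
```

Capping the number of attempts bounds cost and avoids looping forever on a question the model cannot translate.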

AWS Machine Learning Blog

OCTOBER 4, 2023

In this post, we walk you through deploying a Falcon large language model (LLM) using Amazon SageMaker JumpStart and using the model to summarize long documents with LangChain and Python. SageMaker is a HIPAA-eligible managed service that provides tools that enable data scientists, ML engineers, and business analysts to innovate with ML.

ODSC - Open Data Science

FEBRUARY 13, 2025

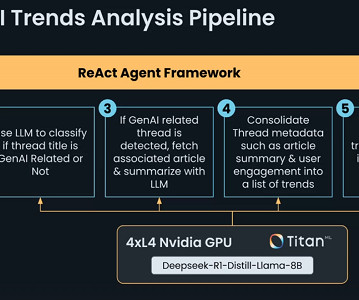

The AI agent classified and summarized GenAI-related content from Reddit, using a structured pipeline with utility functions for API interactions, web scraping, and LLM-based reasoning. He demonstrated practical AI-powered workflows for engineers, including essay generation, research retrieval, and iterative refinement.

AWS Machine Learning Blog

NOVEMBER 30, 2023

Code Editor is based on Code-OSS, Visual Studio Code Open Source, and provides access to the familiar environment and tools of the popular IDE that machine learning (ML) developers know and love, fully integrated with the broader SageMaker Studio feature set. Choose Open CodeEditor to launch the IDE.

AWS Machine Learning Blog

APRIL 29, 2024

In 2024, however, organizations are using large language models (LLMs), which require relatively little focus on NLP, shifting research and development from modeling to the infrastructure needed to support LLM workflows. This often means the method of using a third-party LLM API won’t do for security, control, and scale reasons.

AWS Machine Learning Blog

FEBRUARY 29, 2024

Creating scalable and efficient machine learning (ML) pipelines is crucial for streamlining the development, deployment, and management of ML models. Configuration files (YAML and JSON) allow ML practitioners to specify undifferentiated code for orchestrating training pipelines using declarative syntax.

AWS Machine Learning Blog

FEBRUARY 12, 2025

Machine learning (ML) engineers must make trade-offs and prioritize the most important factors for their specific use case and business requirements. Enterprise Solutions Architect at AWS, experienced in Software Engineering, Enterprise Architecture, and AI/ML. Nitin Eusebius is a Sr.
