Overcoming LLM Hallucinations Using Retrieval Augmented Generation (RAG)

Unite.AI

MARCH 5, 2024

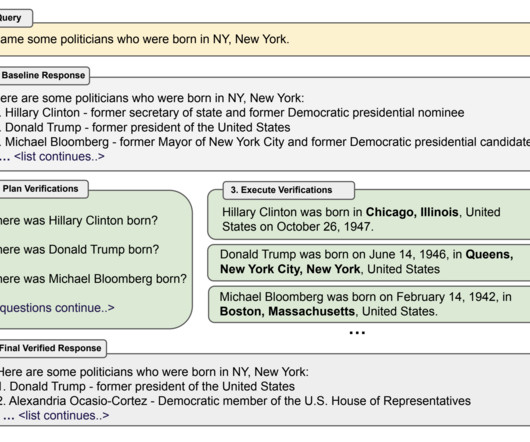

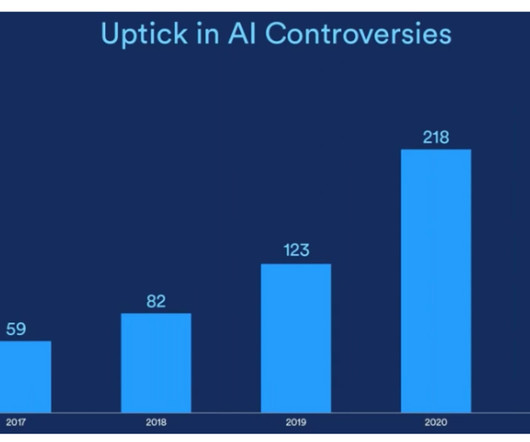

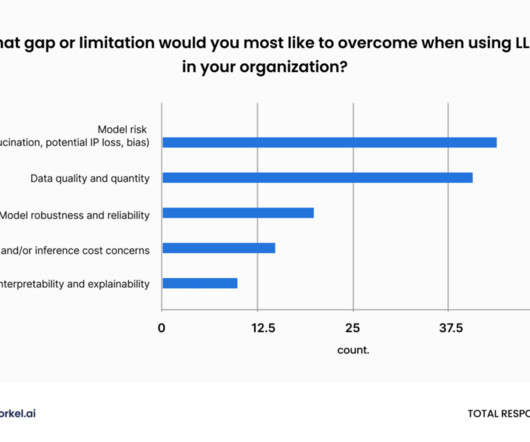

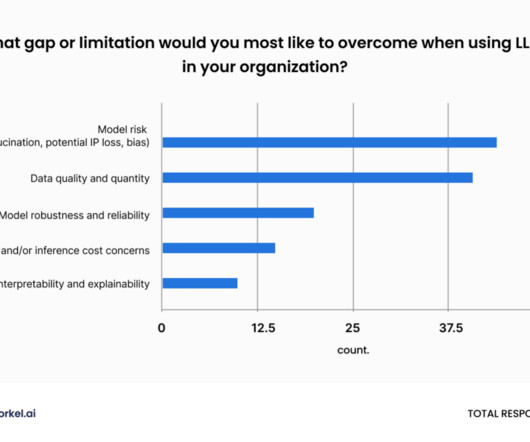

Just as humans might see shapes in clouds or faces on the moon, LLMs can also ‘hallucinate,’ producing information that isn’t accurate. This phenomenon, known as LLM hallucination, is a growing concern as the use of LLMs expands. Retrieval Augmented Generation (RAG) addresses it by grounding a model’s answers in relevant documents retrieved from an external knowledge source. Let’s take a closer look at how RAG makes LLMs more accurate and reliable.
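To make the retrieve-then-augment idea concrete, here is a minimal sketch of the two steps at the core of RAG: find the passages most relevant to a question, then place them in the prompt so the model answers from that context rather than from memory alone. It assumes a tiny in-memory document list (the hypothetical `DOCUMENTS`) and uses plain bag-of-words cosine similarity as a stand-in for the embedding models and vector databases that production RAG systems typically use. The helper names are illustrative, and the actual LLM call is left out.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base; a real system would query a document store.
DOCUMENTS = [
    "The Eiffel Tower was completed in 1889 for the Exposition Universelle in Paris.",
    "Mount Everest is 8,849 metres tall according to a 2020 survey.",
    "Python was first released by Guido van Rossum in 1991.",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the query (stand-in for vector search)."""
    q = Counter(tokenize(query))
    ranked = sorted(
        docs, key=lambda d: cosine_similarity(q, Counter(tokenize(d))), reverse=True
    )
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Augment the question with retrieved passages and ask the model to stay grounded."""
    sources = "\n".join(f"- {passage}" for passage in context)
    return (
        "Answer using only the context below. "
        "If the answer is not in the context, say you don't know.\n"
        f"Context:\n{sources}\n"
        f"Question: {query}"
    )


if __name__ == "__main__":
    question = "When was the Eiffel Tower completed?"
    prompt = build_prompt(question, retrieve(question, DOCUMENTS))
    # In a full RAG pipeline, this prompt would now be sent to the LLM (not shown).
    print(prompt)
```

The instruction to answer only from the supplied context, and to admit when the answer is missing, is what lets retrieval curb hallucination: the model is steered toward verifiable sources instead of filling gaps with plausible-sounding text.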
